As humans, we spend most of our day interacting with user interfaces (UIs). The primary interface we use on a daily basis, or more like every few minutes, is our phone. Smartphones and their touchscreen interfaces have informed the way we expect other devices to work. We have a new set of default expectations, and it’s easy to be disappointed when a UI is not easy to use.

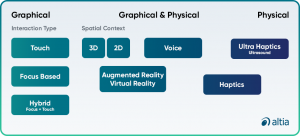

In a past blog post, Exploring the UI Universe: Different Types of UI, we defined UI and identified four common types of user interfaces. While that content remains true, new technology comes with new categories and considerations. In this article, we’re going to share updated types of UI that fall under the umbrellas of graphical user interfaces (GUIs), physical user interfaces and hybrid models.

Our end-users expect UIs to be intuitive and efficient. As engineers or designers, our UI needs to work for the end-user, no matter the device. When we are designing user interfaces, we also need to design for user experience (UX). UI informs UX and in turn, UX informs UI. We want the user to actually enjoy using the product. Not only does the UI need to look good, but the interaction needs to make the end-user feel productive and successful.

Touch UI

Touch screens have overtaken thousands, if not millions, of products and UIs at this point. As a result, UX has become essential for these user interfaces. For nurses and medical professionals, touch screens have started to replace buttons and knobs.

Most ventilator user interfaces are now constructed with touch screens, with highly thought-out and rigorously tested safety modes. Examples include placing critical buttons at the bottom of the screen for quick accessibility, asking twice to confirm a command, or requiring the user to hold down a button on the screen to execute that command.
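To make the hold-to-execute safety mode concrete, here is a minimal sketch in Python. It is illustrative only: the class name `HoldToConfirmButton`, the 1.5-second threshold and the timestamp-based API are all assumptions, not how any particular medical device or toolkit implements it.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class HoldToConfirmButton:
    """Executes a command only if the button was held long enough."""

    # Assumed threshold for illustration; a real device would use a
    # value chosen and validated through usability and safety testing.
    required_hold_s: float = 1.5
    _pressed_at: Optional[float] = field(default=None, init=False)

    def press(self, now_s: float) -> None:
        """Record the time at which the finger touched the button."""
        self._pressed_at = now_s

    def release(self, now_s: float) -> bool:
        """Return True if the hold was long enough to execute the command."""
        if self._pressed_at is None:
            return False  # release without a matching press: ignore it
        held_s = now_s - self._pressed_at
        self._pressed_at = None  # every command needs a fresh press
        return held_s >= self.required_hold_s
```

A quick tap (`press(0.0)` followed by `release(0.4)`) is rejected, while a deliberate hold of at least `required_hold_s` seconds is accepted. The point of the design is that an accidental brush against the screen cannot trigger a critical command.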

Not only are the actual touch screen buttons important, but colors are even more critical in medical situations. Nurses and doctors need to quickly look at a medical device screen and recognize what is going on based on color. Blood pressure is red, oxygen saturation is blue and when a patient takes a breath, it displays in green. These screens need to be able to convey important information at a glance so the user can react quickly by pressing the correct command.

Focus-based UI

When we think of focus-based UIs, a computer screen typically comes to mind. The user will use a mouse or cursor to interact with the product and its screen. The cursor shows the user the current position for interaction, so the user can take an action and the device can respond to that input.

No matter the device, the cursor or pointer needs to be large enough to be seen, with no squinting required. It also needs to contrast with the background on the various screens so it doesn’t get lost to the naked eye. What we’re seeing more and more with focus-based interfaces is a hybrid of a cursor with touch, so that the user can use their finger to move the cursor or pointer.
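One way to check whether a cursor contrasts enough with its background is to compute a contrast ratio. The sketch below uses the relative luminance and contrast ratio formulas from the Web Content Accessibility Guidelines (WCAG) 2.x. Using WCAG as the yardstick, and its 3:1 minimum for non-text UI components, is an assumption on our part; an embedded product may need a different threshold. The function names are hypothetical.

```python
from typing import Tuple

RGB = Tuple[int, int, int]  # 8-bit sRGB channels, 0-255


def _linearize(channel_8bit: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: RGB) -> float:
    """Relative luminance: 0.0 for black, 1.0 for white."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(a: RGB, b: RGB) -> float:
    """Contrast ratio between two colors, from 1.0 (same) to 21.0."""
    la, lb = relative_luminance(a), relative_luminance(b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


def cursor_is_visible(cursor: RGB, background: RGB,
                      minimum_ratio: float = 3.0) -> bool:
    """True if the cursor meets the assumed minimum contrast ratio."""
    return contrast_ratio(cursor, background) >= minimum_ratio
```

A white cursor on a black background gives the maximum ratio of 21:1, while a white cursor on a near-white background fails the check. Running this against each screen's dominant background color is a cheap way to catch a cursor that would get lost.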

2D and 3D UIs

In the 2D world of a digital display, 3D user interfaces add context and detail. For example, in a high-end automotive cluster, a 3D model of the car can show the driver which tire has low pressure or which door is still open, improving ease and efficiency. Altia allows developers to integrate 2D and 3D graphics for custom embedded displays.

For the medical industry, while ultrasounds are still in 2D, a 3D view of a patient will inform a doctor where to place sensors or see an organ from a different vantage point. Cardiovascular surgeons can utilize 3D technology not only to build 3D hearts, but to also see internal views of the heart and potential defects—ultimately saving lives with the addition of a visual UI advancement.

Augmented Reality and Virtual Reality

We are seeing augmented reality (AR) and virtual reality (VR) technology rapidly evolve and become mainstream out of necessity, especially in educational settings and healthcare. While these terms are super trendy and usually grouped together, it’s important to know how AR and VR are different. AR enhances what you see in real life with additional information and graphics, while VR creates a different digital environment that completely replaces what the user sees as their real world.

Healthcare is going to become more visual, and GUIs will make that happen. From learning situations to life-saving surgeries, AR and VR can be implemented to elevate the experience.

Surgeries are already happening laparoscopically with the doctor sitting at a desk with a screen—AR would enhance not only the UX, but also the visibility. All the while, medical devices need to be safe, accurate and easy to use in critical situations. Not only will AR/VR be valuable for medical devices, they will also be critical in head-up displays in cars, trucks, SUVs and even off-highway vehicles.

Voice UI

Voice interfaces allow the user to interact with a product through speech or verbal commands. “Hey Siri” and “Hey Alexa” have become well-known phrases for most people, even if they don’t own one of these virtual assistants. Rattling off a grocery list, requesting a new song, asking for reminders—all user interactions based on voice and executed when the need is top of mind.

Most commonly, we see voice interfaces as both graphical and physical UIs due to the common use of a teleprompter UI to give the user feedback and prompts. A voice interface is a true result of UX/UI design because it needs to hear and understand the voice command then complete the action. We’re seeing voice UX more than ever before and there’s no stopping now.

Haptics and Ultrahaptics

Haptics are technologies that create an experience that the user can physically feel by engaging the sense of touch—think buzzing or vibration when you enter your password incorrectly or your fingerprint doesn’t land just right to unlock your device. Haptics provide the user with a clear sense of success or failure during the interaction.

For the automotive industry, haptics can allow for increased engagement with the vehicle without sacrificing safety. If the driver can feel a notification and react quickly (even with voice or gestures), rather than look away from the road, everyone is safer all around. Haptics will allow your user to engage with your product in a way they can feel, rather than see.

Ultrahaptics allows for interactions with objects mid-air using ultrasound that reflects air pressure waves off a user’s hand. Products with ultrahaptic UIs create the illusion that end-users are actually feeling objects mid-air and allow them to interact with an object on a screen.

Hybrid models

What you’ll start seeing more and more are hybrid user interfaces that include a combination of modalities and interactions. While voice can work when a user is alone and somewhere quiet, gesture or touch interfaces are more convenient and less intrusive in a public setting. Moreover, on a construction site, the user may not be able to utilize voice, but they can touch a screen to flip through the digital construction blueprints. For the medical industry, if a medical professional is wearing gloves, gesture-based or voice user interfaces can be a better option.
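The scenarios above boil down to choosing modalities based on the user's context. Here is a rough sketch of that idea in Python. The `Context` fields, the `usable_modalities` function and the rules themselves are simplifying assumptions drawn from the examples in this section, not a complete decision model.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Context:
    """Hypothetical description of where and how the product is used."""
    noisy: bool = False           # e.g. a construction site
    in_public: bool = False       # other people are within earshot
    wearing_gloves: bool = False  # e.g. a medical professional


def usable_modalities(ctx: Context) -> List[str]:
    """Return the input modalities that suit the given context."""
    modalities: List[str] = []
    # Voice works best when the user is alone and somewhere quiet.
    if not ctx.noisy and not ctx.in_public:
        modalities.append("voice")
    # Gesture is unobtrusive in public and does not need bare hands.
    modalities.append("gesture")
    # Assumption: touch is treated as unreliable with gloved hands.
    if not ctx.wearing_gloves:
        modalities.append("touch")
    return modalities
```

For a noisy site, `usable_modalities(Context(noisy=True))` drops voice and keeps gesture and touch. For a gloved user in a quiet room, touch is dropped and voice and gesture remain. A real product would weigh many more factors, but the sketch shows why offering more than one modality pays off.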

Not only can graphical interfaces be easy to use and intuitive, but these types of interfaces (especially touch and haptic UIs) are also what end-users expect. Hybrid UIs that include touch and 3D features can be product differentiators that not only impress your end-user, but ultimately facilitate an incredible user experience.

Conclusion

Ultimately, you want to strike a balance between innovation and function. While the idea of flashy and highly detailed graphics on your UI sounds great in a brainstorm session, sometimes the simpler, the better. You want to design a user interface that allows an end-user to intuitively get the job done, whether that’s conducting a complicated medical procedure or starting a washing machine.

The future of UI is incredibly exciting. Innovations that turn common surfaces, like an automotive armrest, into a UI control are currently in the works. Technologies like conductive thread, mechatronics and smart materials will allow companies developing UIs to create futuristic user experiences.

With Altia’s model-based development approach, you gain the power to create a custom user interface model in Altia Design, our GUI editor. These models enable clear communication, fast feedback and iterations for your UI design. Altia’s software allows for testing with users in real-life use cases throughout your development process—both in a runtime environment and on test hardware—to ensure that your UI’s UX meets your end-users’ expectations for performance, features and ease of use. Once your GUI is perfected, Altia DeepScreen generates optimized, certifiable C code for your production hardware so that you get your best UX from concept to production quickly, efficiently and successfully.

From graphic design optimized for UX to prototypes and testing, we can do it all. Contact us for a free demo today!